Redisson Starter

Dive into Redisson - a powerful Java client for Redis.

Redisson is a feature-rich Java client for Redis, designed to offer high-level abstractions and easy integration with Java applications.

Why do we choose Redisson?

While multiple Redis clients are available for Java, including Jedis, Lettuce, and Spring Data Redis, Redisson stands out due to its rich feature set, ease of use, and strong support for distributed systems. New: Redisson now supports Valkey.

Clients Comparison

Below is a quick comparison of the most popular Java Redis clients:

| Feature/Client | Redisson | Spring Data Redis | Jedis | Lettuce |
| --- | --- | --- | --- | --- |
| Distributed Objects | Rich set (Map, Set, List, Lock, Semaphore, etc.) | Not available | Not available | Not available |
| Distributed Locks | Built-in and easy to use | Needs manual implementation or extensions | No built-in support | No built-in support |
| Serialization | Customizable, supports many codecs | Limited to RedisTemplate configuration | Manual serialization needed | Limited |
| Spring Boot Integration | Dedicated starter and auto-configuration | Native support | Not provided out of the box | Not supplied out of the box |
| Thread Safety | Fully thread-safe | Depends on the underlying client | Not thread-safe | Thread-safe |

Based on this comparison, Redisson is a strong choice when you need distributed objects and locks out of the box.


Spring Boot Starter

In this starter, you'll learn how to use Redis with the Redisson library. All examples use Spring Boot 3.4.5 with Redisson 3.45.1.

ATTENTION: You can see full examples in this GitHub repo.

To get started, include the following dependency:

```xml
<dependency>
    <groupId>org.redisson</groupId>
    <artifactId>redisson-spring-boot-starter</artifactId>
    <version>3.45.1</version>
</dependency>
```

While you can also use the redisson artifact directly, using the redisson-spring-boot-starter is recommended. It provides useful Spring Boot auto-configurations, health checks through Actuator, and additional Spring-specific integrations.

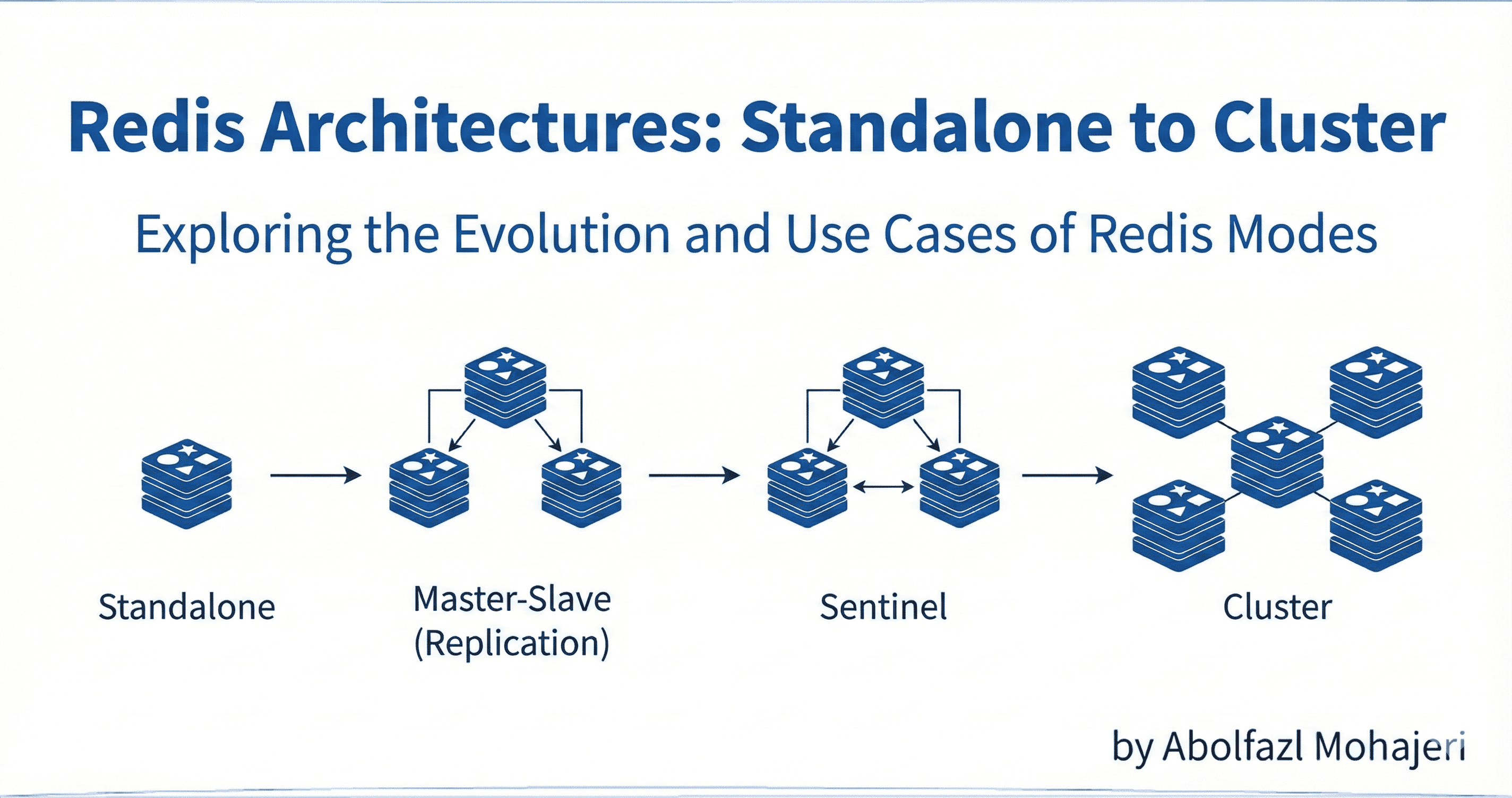

We will configure the Redis cluster based on the doc we provided before.

```yaml
spring:
  redis:
    redisson:
      file: classpath:redisson.yaml
```

In redisson.yaml:

```yaml
clusterServersConfig:
```

You can set many more options in this file; the most important ones are listed in Appendix 1. Tune them based on your workload.

Important Notes

Connection Pool Size

- Ensure that the connection pool size is properly configured based on your workload. A pool that's too small can lead to connection timeouts under high load, while an oversized pool may waste resources.

Cluster Scan Frequency

- Avoid setting the scanInterval too low. Scanning the cluster too frequently can create unnecessary network overhead and impact performance, especially in large-scale deployments.

Ping Interval Configuration

- Frequent pings to maintain connections can lead to excessive traffic and latency. Tune the pingConnectionInterval based on your environment and latency tolerance.


Redis Health for Spring Boot App

Spring Boot Actuator provides a default Redis health indicator, but it offers very limited information. By default, it returns something like:

```json
"redis": {
  "status": "UP",
  "details": {
    "cluster_size": 3,
    "slots_up": 16384,
    "slots_fail": 0
  }
}
```

To get more detailed insights, such as the health status of each Redis master node, you can implement a custom health indicator based on your needs. For example:

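```java
import java.util.Collection;

import lombok.AccessLevel;
import lombok.RequiredArgsConstructor;
import lombok.experimental.FieldDefaults;
import lombok.extern.slf4j.Slf4j;
import org.redisson.api.RedissonClient;
import org.redisson.api.redisnode.RedisCluster;
import org.redisson.api.redisnode.RedisClusterMaster;
import org.redisson.api.redisnode.RedisNodes;
import org.springframework.boot.actuate.health.Health;
import org.springframework.boot.actuate.health.HealthIndicator;
import org.springframework.stereotype.Component;

@Slf4j
@Component
@RequiredArgsConstructor
@FieldDefaults(level = AccessLevel.PRIVATE, makeFinal = true)
public class RedisMastersHealthIndicator implements HealthIndicator {

    RedissonClient redissonClient;

    @Override
    public Health health() {
        RedisCluster cluster = redissonClient.getRedisNodes(RedisNodes.CLUSTER);
        Collection<RedisClusterMaster> masters = cluster.getMasters();

        // Ping every master and report DOWN as soon as one is unreachable.
        for (RedisClusterMaster master : masters) {
            try {
                if (!master.ping()) {
                    log.error("Redis master node {} is DOWN", master);
                    return Health.down().withDetail("failedNode", master.toString()).build();
                }
            } catch (Exception e) {
                log.error("Redis master node {} is DOWN", master, e);
                return Health.down().withDetail("failedNode", master.toString()).build();
            }
        }

        return Health.up().withDetail("mastersChecked", masters.size()).build();
    }
}
```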

This custom indicator pings every Redis master node and reports DOWN if any of them is unreachable.

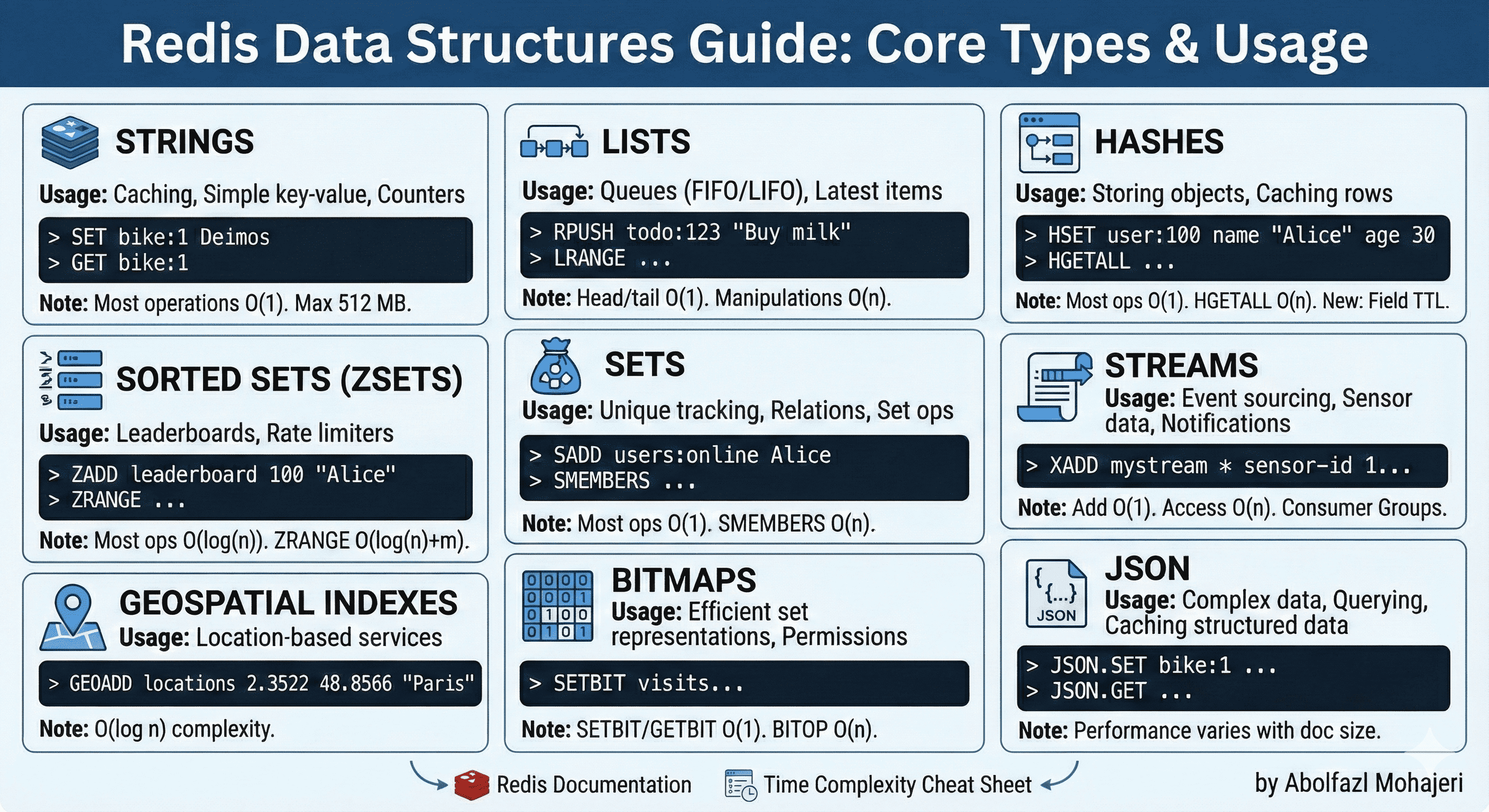

Distributed Collections (Data Structures)

The table below maps Redis data types to their Redisson wrappers:

| Redis Type | Description | Redisson Equivalent |
| --- | --- | --- |
| String | Basic key-value store. | RBucket |
| List | Ordered list of values. | RList |
| Set | Unordered unique values. | RSet |
| Hash | Key-value pairs within a key. | RMap |
| Sorted Set | Sorted by score. | RScoredSortedSet |
| Geo | Geospatial indexes. | RGeo |
| Bitmaps | Bit-level operations on strings. | RBitSet |

Example of each:

```java
// String -> RBucket: a single value stored under one key
RBucket<String> bucket = redissonClient.getBucket("myKey");
bucket.set("Hello, Redisson!");
System.out.println(bucket.get());

// List -> RList: ordered, allows duplicates
RList<String> list = redissonClient.getList("myList");
list.add("Apple");
list.add("Banana");
System.out.println(list.get(0));

// Set -> RSet: the second add("A") is ignored
RSet<String> set = redissonClient.getSet("mySet");
set.add("A");
set.add("B");
set.add("A");
System.out.println(set.contains("B"));

// Hash -> RMap: field/value pairs under one key
RMap<String, Integer> map = redissonClient.getMap("myMap");
map.put("views", 10);
System.out.println(map.get("views"));

// Sorted Set -> RScoredSortedSet: first() returns the element with the lowest score
RScoredSortedSet<String> scoredSet = redissonClient.getScoredSortedSet("mySortedSet");
scoredSet.add(9.5, "Alice");
scoredSet.add(8.0, "Bob");
System.out.println(scoredSet.first());

// Geo -> RGeo: arguments are longitude, latitude, member
RGeo<String> geo = redissonClient.getGeo("myGeo");
geo.add(13.361389, 38.115556, "Palermo");
geo.add(15.087269, 37.502669, "Catania");
System.out.println(geo.dist("Palermo", "Catania", GeoUnit.KILOMETERS));

// Bitmaps -> RBitSet: individual bits addressed by index
RBitSet bitSet = redissonClient.getBitSet("myBitSet");
bitSet.set(0, true);
bitSet.set(1, false);
System.out.println(bitSet.get(0));
```

Map & Set

Redisson provides several Map implementations with different features (many of them are only available in Redisson PRO 🙁). The main groups are listed below; Appendix 2 explains what local cache and data partitioning mean.

No eviction

Available implementations:

- getMap()
- getLocalCachedMap()

Scripted eviction

Allows for defining time-to-live or max idle time parameters per map entry.

Eviction is done on the Redisson side through a scheduled task that removes expired entries using a Lua script.

Available implementations:

- getMapCache()
  - Example (40 seconds time to live, 10 seconds max idle time): map.putIfAbsent("key2", new SomeObject(), 40, TimeUnit.SECONDS, 10, TimeUnit.SECONDS);

Advanced eviction

- All for Redisson Pro

Native eviction

Allows for defining time-to-live parameters per map entry.

Doesn't use an entry eviction task; entries are cleaned up on the Redis side.

Requires Redis 7.4+.

Available implementations:

- getMapCacheNative()

Similar methods are available for RSet as well. For more details, refer to the documentation.

Danger: Don't rely on Redisson TTL alone. Scripted eviction (getMapCache()) is performed by the Redisson client, so expired entries are only cleaned up while an application instance is running. If TTL is critical to your business logic (e.g. session expiry, rate limiting, locks):

- Always use Redis-native expiration.
- Avoid relying on Redisson TTL if app uptime isn't guaranteed.

Possible solutions:

- Use RBucket or RMapCacheNative, which rely on native Redis TTL.
- Sometimes you can use the Redis key TTL instead of per-entry TTL:
  - redissonClient.getKeys().expire("myMap", 10, TimeUnit.SECONDS);

Multimap

Multimap for Java allows binding multiple values per key. This object is thread-safe. Keys are limited to 4,294,967,295 elements.

Eviction support for Multimap is implemented by separate MultimapCache objects: RSetMultimapCache for Set-based and RListMultimapCache for List-based Multimaps. The eviction task is started once per unique object name, at the moment a Multimap instance is obtained.

```java
// Set-based multimap: the duplicate (1, 3) pair is stored only once
RSetMultimap<Integer, Integer> set = redissonClient.getSetMultimap("mySetMultimap");
set.put(1, 1);
set.put(1, 2);
set.put(1, 3);
set.put(1, 3);
set.put(2, 1);

System.out.println("SetMultimap:");
set.keySet().forEach(key -> {
    System.out.println(key + " => " + set.get(key));
});

// List-based multimap: the duplicate (1, 3) pair is kept
RListMultimap<Integer, Integer> map = redissonClient.getListMultimap("myListMultimap");
map.put(1, 1);
map.put(1, 2);
map.put(1, 3);
map.put(1, 3);
map.put(2, 1);

System.out.println("ListMultimap:");
map.keySet().forEach(key -> {
    System.out.println(key + " => " + map.get(key));
});
```

Danger: Redisson's RSetMultimap and RListMultimap can create many Redis keys, especially when you have many keys or values.

When you use:

RSetMultimap<Integer, Integer> map = redissonClient.getSetMultimap("mySetMultimap");

Redisson internally creates one Redis key for each multimap key (plus some metadata), like:

mySetMultimap:{key}

mySetMultimap, mySetMultimap:{1}, mySetMultimap:{2}, and …

This causes problems such as key growth when there are many keys/values and slower listing or scanning operations, among others.

Some possible solutions:

- Use RMap<K, Set<V>> instead of a Redisson Multimap.
- Distribute keys across a fixed number of buckets using modulo: multimap:<bucketId>.
- Use RMap<String, String> with CSV/JSON values (not recommended).
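
The modulo option above can be sketched in plain Java. This is only an illustration, not part of Redisson: the class name `MultimapBuckets`, the method `bucketName`, and the bucket count of 4 are hypothetical. Each returned name would back one regular map (for example an RMap<K, Set<V>>), so the number of Redis keys is bounded by the bucket count rather than by the number of multimap keys.

```java
final class MultimapBuckets {

    /**
     * Maps a multimap key to one of a fixed number of bucket names,
     * e.g. "multimap:3". Math.floorMod keeps the bucket id non-negative
     * even when hashCode() is negative.
     */
    static String bucketName(String prefix, Object key, int bucketCount) {
        if (bucketCount <= 0) {
            throw new IllegalArgumentException("bucketCount must be positive");
        }
        int bucketId = Math.floorMod(key.hashCode(), bucketCount);
        return prefix + ":" + bucketId;
    }

    public static void main(String[] args) {
        // Hypothetical setup: 4 buckets under the "multimap" prefix.
        System.out.println(bucketName("multimap", 7, 4));  // prints "multimap:3"
        System.out.println(bucketName("multimap", -1, 4)); // prints "multimap:3"
        System.out.println(bucketName("multimap", 8, 4));  // prints "multimap:0"
    }
}
```

This only works if the key's hashCode() is stable across JVMs and restarts, which holds for String and Integer but not for classes that inherit Object.hashCode().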

Important Notes

Redisson allows you to bind listeners to certain collections, such as RMap. For more details, refer to the documentation.

Redisson also supports distributed queues.

Redisson also supports time series.

For some data structures, choosing an efficient codec can noticeably reduce memory and network cost.


Distributed Locks

Redisson provides robust distributed locking mechanisms to ensure safe concurrent access in clustered or multi-pod environments.

You can acquire a lock using the following methods:

RLock.lock()

- Blocks indefinitely until the lock is acquired.
- Simple, but use it only when you're sure the lock will be released eventually.

RLock.tryLock(waitTime, leaseTime, timeUnit)

- Attempts to acquire the lock, waiting up to waitTime.
- If acquired, holds the lock for leaseTime and then auto-releases it.
- Safer than lock() in most distributed environments.

RReadWriteLock

- Allows many readers OR one writer.
- Useful when multiple pods need concurrent read access but only one pod should perform a write/update at a time.

RFairLock

- Ensures first-come, first-served access to the lock.
- Use when fairness (e.g., request order) is important.

RMultiLock

- Combines multiple locks into a single logical lock.
- Acquires all or none. Ideal when working with multiple related resources (e.g., account transfers).

Important Notes

- Lock key should be unique per logical task (e.g. "lock:register:" + userId).
- Always release locks in finally blocks to prevent deadlocks.
- Avoid overusing locks; they add latency and reduce throughput.
  - Use locks only when multiple related keys or complex logic must be executed as a unit.
- Favor atomic Redis operations when possible.
  - Atomic operations like INCR, SETNX, and HINCRBY are built-in, single-step commands in Redis.
  - They avoid the overhead of acquiring/releasing distributed locks.
- By default, the lock watchdog timeout is 30 seconds; it can be changed through the Config.lockWatchdogTimeout setting.
- See the Redisson documentation for the full list of lock types and pick the one that fits your needs.
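
The "release in a finally block" rule above can be sketched as a small helper. RLock extends java.util.concurrent.locks.Lock, so the helper is written against the standard interface. The class name `LockedSection` and the method `runWithLock` are hypothetical, and the `ReentrantLock` in `main` is only a local stand-in for a lock obtained from Redisson. The standard `tryLock(time, unit)` has no lease time; to set one, call Redisson's own `tryLock(waitTime, leaseTime, timeUnit)` on the RLock instead.

```java
import java.util.concurrent.TimeUnit;
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantLock;

final class LockedSection {

    /**
     * Runs the action only if the lock is acquired within waitTime.
     * Returns true if the action ran, false if the lock was not acquired.
     */
    static boolean runWithLock(Lock lock, long waitTime, TimeUnit unit, Runnable action) {
        boolean acquired;
        try {
            acquired = lock.tryLock(waitTime, unit);
        } catch (InterruptedException e) {
            // Restore the interrupt flag and treat this as "not acquired".
            Thread.currentThread().interrupt();
            return false;
        }
        if (!acquired) {
            return false;
        }
        try {
            action.run();
            return true;
        } finally {
            // Always release, even if the action throws.
            lock.unlock();
        }
    }

    public static void main(String[] args) {
        Lock lock = new ReentrantLock(); // stand-in for a Redisson RLock
        boolean ran = runWithLock(lock, 100, TimeUnit.MILLISECONDS,
                () -> System.out.println("inside critical section"));
        System.out.println("ran = " + ran);
    }
}
```

Returning a boolean instead of throwing lets the caller decide what "could not get the lock" means for its use case, for example skipping a scheduled job that another pod is already running.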


Distributed Tasks

Redisson supports distributed task execution using the RExecutorService, allowing tasks to be submitted and executed across multiple nodes in a cluster.

Here's a compact summary of the most useful Redisson Distributed Task methods:

submit(task)

- Submits a task for execution on any available node.

schedule(task, delay, unit)

- Schedules a one-time task to run after a delay.

Important Notes

- registerWorkers is crucial when using Redisson's RScheduledExecutorService or RExecutorService. It registers the local JVM process as a worker node that can execute submitted tasks.
  - executor.registerWorkers(...) tells Redisson: “This instance is available to run background tasks.”
  - WorkerOptions.defaults().workers(1) means: “Allow 1 background thread in this instance to execute submitted jobs.”
- You can add listeners such as TaskSuccessListener to WorkerOptions.
- You can submit both Runnable and Callable tasks.
- The schedule method also accepts cron expressions.
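
Because RExecutorService extends java.util.concurrent.ExecutorService, the submitting side can be written against the standard interface. The sketch below shows that shape under stated assumptions: `TaskSubmission`, `WordCountTask`, and `countWords` are hypothetical names, the task is written as a named Serializable class on the assumption that it has to travel to another node, and the local executor in `main` is only a stand-in for an executor obtained from Redisson with registered workers.

```java
import java.io.Serializable;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

final class TaskSubmission {

    /** Hypothetical task: a named, serializable class holding only simple state. */
    static final class WordCountTask implements Callable<Integer>, Serializable {
        private static final long serialVersionUID = 1L;
        private final String text;

        WordCountTask(String text) {
            this.text = text;
        }

        @Override
        public Integer call() {
            String trimmed = text.trim();
            return trimmed.isEmpty() ? 0 : trimmed.split("\\s+").length;
        }
    }

    /** Submits the task and blocks until whichever worker picked it up returns a result. */
    static int countWords(ExecutorService executor, String text) {
        try {
            return executor.submit(new WordCountTask(text)).get();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("Interrupted while waiting for the task", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("Task failed", e.getCause());
        }
    }

    public static void main(String[] args) {
        ExecutorService local = Executors.newSingleThreadExecutor(); // stand-in executor
        try {
            System.out.println(countWords(local, "hello distributed world")); // prints 3
        } finally {
            local.shutdown();
        }
    }
}
```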


Pub/Sub

The RTopic object implements the publish/subscribe mechanism on top of Redis Pub/Sub. Clients receive every message published through any RTopic instance with the same name. Listeners are re-subscribed automatically after reconnection or failover.

If you're not using Reliable Topic, any messages published while the client is disconnected will be lost. Use RReliableTopic if message durability is critical.


Streams

Redisson's RStream object wraps the Redis Streams data structure, which is ideal for building scalable, event-driven systems.

Important Notes

Always ack() messages after successful processing to remove them from the pending list.

Redisson has more methods like pending and claim that you can use based on your needs.


General Important Notes

Before using Redis in your service, please review and follow these important guidelines:

**Evaluate if Redis is the right choice:** Make sure Redis fits your architecture and use case. Redis is best suited for high-performance caching, real-time analytics, pub/sub messaging, distributed locks, and short-lived data. It is not ideal for storing large or critical persistent data unless configured with proper persistence and backup strategies.

**Design for fault tolerance:** Your service must handle Redis failures gracefully. Redis is an external dependency and can become temporarily unavailable.

- Always implement fallback mechanisms or default behaviors when Redis is down.
- Avoid making your entire service dependent on Redis availability.

**Be careful with data eviction and TTLs:**

- If you use Redis as a cache, always define appropriate TTL values to prevent stale data and memory exhaustion.
- Do not assume Redis data will persist indefinitely.

**Use proper serialization and data structures:**

- Choose an efficient serialization format and be consistent across services.
- Use the right Redis data type for your purpose (String, Hash, List, Set, Sorted Set, etc.).

**Monitor and observe Redis usage:**

- Continuously monitor key metrics like memory usage, hit/miss ratio, latency, and connection counts.
- Tools like RedisInsight and Grafana metrics can help.
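
The fault-tolerance guideline above can be sketched as a small read-through helper. This is a minimal illustration with hypothetical names (`RedisFallback`, `readOrFallback`); it assumes that Redis client failures surface as unchecked exceptions, and that the fallback (for example a database query or a default value) is always available.

```java
import java.util.function.Supplier;

final class RedisFallback {

    /**
     * Tries the cache first; uses the fallback when the cache read fails
     * or returns nothing, so a Redis outage degrades the service instead
     * of breaking it.
     */
    static <T> T readOrFallback(Supplier<T> cacheRead, Supplier<T> fallback) {
        try {
            T value = cacheRead.get();
            return value != null ? value : fallback.get();
        } catch (RuntimeException e) {
            // Assumption: connection errors and timeouts arrive as unchecked exceptions.
            return fallback.get();
        }
    }

    public static void main(String[] args) {
        // Simulated outage: the cache read throws, so the fallback value is used.
        String value = RedisFallback.<String>readOrFallback(
                () -> { throw new IllegalStateException("redis unavailable"); },
                () -> "value-from-database");
        System.out.println(value); // prints "value-from-database"
    }
}
```

In a real service the catch block would also log the failure and update a metric, so that a silent fallback does not hide a long-running outage.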

Helpful Definitions

| Term | Meaning |
| --- | --- |
| High Availability | The system keeps working even if some parts stop. |
| Fault Tolerance | The ability of a system to continue working even if some parts fail. It prevents total system failure. |
| Failover | Switching to a backup system if the main one fails. |
| Replication | Making copies of data from one server to another. |
| Throughput | The amount of work or data a system can process in a given amount of time. |
| Latency | The time it takes for a request to travel from sender to receiver and get a response. |
| Scalability | The system’s ability to handle increased load by adding resources. |

Appendix

Appendix 1

Most important configs are:

- clientName:

Default value: null

Name of client connection.

- nodeAddresses:

Addresses of the Redis cluster nodes to connect to. Redisson automatically discovers the rest of the cluster topology.

- readMode:

Default value: SLAVE

Set node type used for read operation.

Available values: SLAVE, MASTER and MASTER_SLAVE

- subscriptionMode:

Default value: MASTER

Set node type used for pub/sub operation.

Available values: SLAVE and MASTER

- scanInterval:

Default value: 1000

Interval in milliseconds between cluster topology scans.

- pingConnectionInterval:

Default value: 30000

This setting allows for detecting and reconnecting broken connections, using the PING command.

Set to 0 to disable.

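- slave/master/subscriptionConnectionMinimumIdleSize:

Default value: 24 for slave and master connections, 1 for subscription connections

Minimum number of idle connections per node (per subscription channel for the subscription setting).

- slave/master/subscriptionConnectionPoolSize:

Default value: 64 for slave and master connections, 50 for subscription connections

Maximum connection pool size per node (per subscription channel for the subscription setting).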

- connectTimeout:

Default value: 10000

Timeout in milliseconds during connecting to server.

- idleConnectionTimeout:

Default value: 10000

If a pooled connection is not used for a timeout time and the current connections amount is bigger than the minimum idle connections pool size, then it will be closed and removed from the pool.

- subscriptionTimeout:

Default value: 7500

Defines subscription timeout in milliseconds applied per channel subscription.

- timeout:

Default value: 3000

Server response timeout in milliseconds.

Starts countdown after a command is successfully sent.

- retryAttempts:

Default value: 3

An error is thrown if a command can't be sent to the server after retryAttempts attempts. Once it is sent successfully, the timeout countdown starts.

- retryInterval:

Default value: 1500

Time interval in milliseconds, after which another attempt to send a command will be executed.

- failedSlaveReconnectionInterval:

Default value: 3000

Interval of Slave reconnection attempts, when it was excluded from an internal list of available servers.

On each timeout event, Redisson tries to connect to the disconnected server.

- failedSlaveNodeDetector:

Default value: org.redisson.client.FailedConnectionDetector

Defines the failed Slave node detector object which implements failed node detection logic via the org.redisson.client.FailedNodeDetector interface.

Available implementations:

org.redisson.client.FailedConnectionDetector

org.redisson.client.FailedCommandsDetector

org.redisson.client.FailedCommandsTimeoutDetector

- keepAlive:

Default value: false

Enables TCP keepAlive for connection.

- tcpNoDelay:

Default value: true

Enables TCP noDelay for connections.

- nettyThreads:

Default value: 32

Defines the number of threads shared between all internal clients used by Redisson.

Netty threads are used for response decoding and command sending.

0 = cores_amount * 2

- threads:

Default value: 16

Number of threads shared by the listeners of RTopic objects, the invocation handlers of RRemoteService, and RExecutorService tasks.

- codec:

Default value: org.redisson.codec.Kryo5Codec

Used during read/write data operations.

Several serialization implementations are available.

- lockWatchdogTimeout:

Default value: 30000

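Lock watchdog timeout in milliseconds. The lock expires after this time unless the watchdog extends it, which prevents locks from staying held forever when the Redisson client that owns them crashes.

This parameter is only used if an RLock object is acquired without the leaseTime parameter.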

Appendix 2

Local cache and data partitioning mean the following:

Local cache

- A so-called near cache, used to speed up read operations and avoid network round trips. It caches Map entries on the Redisson side and executes read operations up to 45x faster than the common implementation.

Data partitioning

- Although any Map object is cluster compatible, its content isn't scaled/partitioned across multiple master nodes in the cluster. Data partitioning lets you scale available memory, read/write operations, and entry eviction processes for individual Map instances in a cluster.